17 Scripting

Prerequisites (read first if unfamiliar): Chapter 14, Chapter 16.

See also: Chapter 33, Chapter 31, Chapter 28.

Purpose

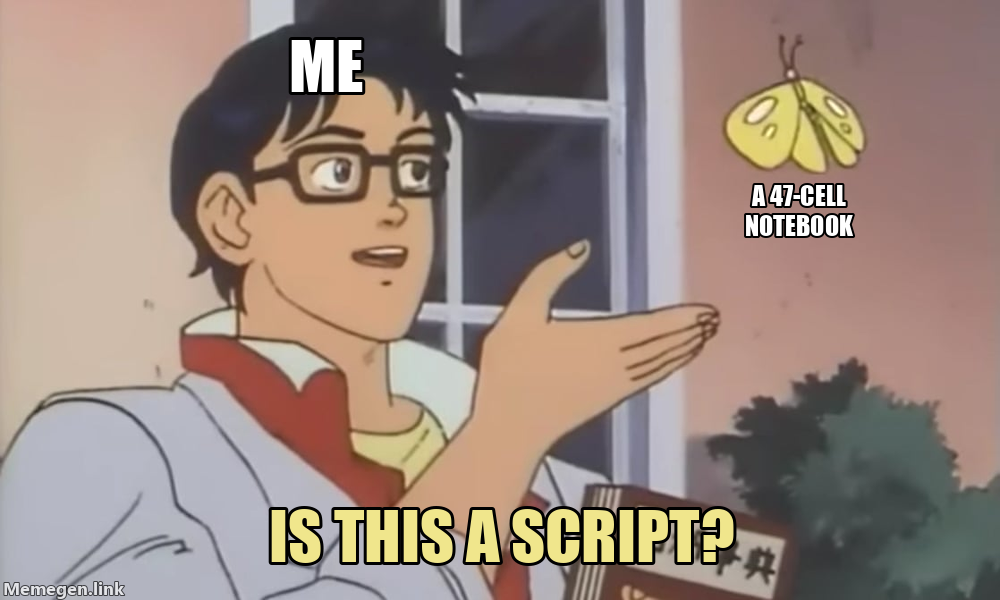

As students progress, the limiting factor is rarely “can you write code”; it is whether your work can be reused, rerun, and explained. Scripts complement notebooks by making work repeatable from the command line and easier to automate, test, and share. This chapter teaches how to write simple scripts, import your own code into notebooks, translate notebooks into scripts, pass parameters from the command line, and make deliberate notebook-versus-script choices.

Learning objectives

By the end of this chapter, you should be able to:

Write a Python script that can be run from the command line and imported as a module.

Organize reusable code into functions and (optionally) a small src/ module.

Import custom scripts into a Jupyter notebook and reload changes during iteration.

Convert notebooks to scripts (and scripts to notebooks) using standard tools.

Pass parameters into scripts using a command-line interface (CLI) pattern.

Explain trade-offs: when a notebook is the right tool and when a script is.

Use a “notebook as narrative, script as engine” pattern to keep work reproducible.

Running theme: separate logic from presentation

The logic (data loading, cleaning, analysis) should live in importable functions. The notebook (or script) should orchestrate and explain.

17.1 Mental models and vocabulary

Script, module, package

Python has three words for “a file with Python code in it,” and they are not interchangeable — each describes a different role the same file can play. A script is a file you run from the command line, like python analyze.py. A module is a file you import from other code, like import analysis. A package is a directory of modules that can be imported as a unit, usually with an __init__.py file inside it that tells Python “this folder is importable.”

The subtle part is that the same .py file can be all three at once. A file called cleaning.py that has a few functions and an if __name__ == "__main__": block at the bottom is a module when you import it (from cleaning import clean_sales), a script when you run it (python cleaning.py), and part of a package if you put it inside a folder with an __init__.py. Understanding this is what lets you build a small library of reusable functions that also happen to work as command-line tools; you do not have to choose.

```text
my-project/
├── cleaning.py          # a script (runnable) AND a module (importable)
├── src/
│   ├── __init__.py      # makes src/ a package
│   ├── loader.py        # module inside the package
│   └── plotting.py      # another module inside the package
└── notebooks/
    └── exploration.ipynb
```

From a notebook or another script, you can then from src.loader import load_csv or from src.plotting import make_histogram, and the shared functions live in exactly one place.

Entry point vs library code

Inside any non-trivial script or package, it is worth separating two kinds of code: library code and entry-point code. Library code is the functions and classes that actually do the work — clean_sales(df), train_model(x, y), plot_distribution(values). Each one takes some inputs and returns some outputs, and each one is testable, importable, and reusable in isolation. It knows nothing about where its inputs came from or what the user asked for on the command line.

Entry-point code is the glue that connects library code to the outside world. It reads command-line arguments, opens files, calls the library functions in the right order, and writes the outputs somewhere. The entry-point code is the only place that should know things like “the user typed --input data/raw/survey.csv” or “the output goes to data/processed/.” If you keep this boundary clean, swapping a new entry point (like a web API or a batch job) onto the same library is almost free.

```python
import pandas as pd

# Library code (reusable, testable, zero knowledge of the command line)
def clean_sales(df):
    df = df.copy()  # work on a copy so the caller's DataFrame is untouched
    df.columns = df.columns.str.strip().str.lower()
    return df.dropna(subset=["customer_id"])

# Entry point (argparse, file paths, orchestration)
def main():
    args = parse_args()        # argparse helper, as in Section 17.5
    df = pd.read_csv(args.input)
    cleaned = clean_sales(df)  # library call
    cleaned.to_csv(args.output, index=False)
```

The heuristic is: if a function depends on sys.argv, on a hardcoded path, or on the user’s working directory, it is entry-point code. If it takes its inputs as arguments and returns its outputs as return values, it is library code. Keep them in separate functions, even inside the same file.

Working directory and paths

The single most surprising thing about moving code between a script and a notebook is that the meaning of a relative path depends on where you launched Python from. A script that reads data/raw/survey.csv works fine when you run python src/clean.py from the project root, and then fails with FileNotFoundError the moment you run the same file from inside the src/ folder. The path did not change; the working directory did.

The habit that makes this a non-issue is to always run your code from the project root and write every path relative to that root. The project root is the folder that contains README.md, data/, src/, notebooks/ — the one that looks, from the outside, like “the project.” As long as you cd there before running anything, the same relative paths behave the same way everywhere.

```shell
$ cd ~/Courses/INFO-3010/Project   # project root
$ python src/clean.py              # data/raw/... resolves correctly
$ jupyter lab                      # start Jupyter from the same root
```

For scripts that need to be runnable from anywhere (for example, scheduled jobs), the trick from Chapter 10 still applies: Path(__file__).resolve().parent gives you the script’s own folder, and from there you can walk up to the project root (for src/clean.py, that is Path(__file__).resolve().parent.parent) without depending on the caller’s CWD. The general principle is the same either way: pick an anchor once, and resolve every path relative to it.
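As a minimal sketch of the "pick an anchor once" idea, the helper below walks upward from a starting path until it finds a marker file. The name find_project_root and the choice of README.md as the marker are illustrative assumptions, not something the chapter's layout requires.

```python
from pathlib import Path


def find_project_root(start: Path, marker: str = "README.md") -> Path:
    """Return the nearest folder at or above `start` that contains `marker`.

    Hypothetical helper: the marker name is an assumption; use any file
    that exists only in your project root.
    """
    start = start.resolve()
    # If `start` is a file (for example __file__), begin at its folder.
    first = start if start.is_dir() else start.parent
    for folder in [first, *first.parents]:
        if (folder / marker).exists():
            return folder
    raise FileNotFoundError(f"no {marker} found at or above {start}")
```

In a script, ROOT = find_project_root(Path(__file__)) followed by ROOT / "data" / "raw" / "survey.csv" would give a path that does not depend on the caller's working directory.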

17.2 Writing scripts: the essentials

A minimal Python script has a predictable shape: imports at the top, then constants and configuration, then the functions that do the work, then a main() function that orchestrates them, and finally the if __name__ == "__main__": block at the bottom that runs main() when the file is executed as a script.

```python
"""Clean and summarize the Q3 sales CSV."""

from pathlib import Path

import pandas as pd

INPUT = Path("data/raw/sales.csv")
OUTPUT = Path("data/processed/sales_clean.csv")


def clean(df):
    df = df.copy()  # leave the caller's DataFrame untouched
    df.columns = df.columns.str.strip().str.lower()
    df["amount"] = pd.to_numeric(df["amount"], errors="coerce")
    return df.dropna(subset=["amount"])


def main():
    df = pd.read_csv(INPUT)
    cleaned = clean(df)
    cleaned.to_csv(OUTPUT, index=False)
    print(f"wrote {len(cleaned):,} rows to {OUTPUT}")


if __name__ == "__main__":
    main()
```

The reason that little if __name__ == "__main__": line at the bottom matters is that it lets the same file work as both a script and an importable module. When you run python clean.py from the command line, Python sets the special variable __name__ to "__main__" and the body of the if runs, calling main() and doing the work. When some other file does from clean import clean to import just the function, Python sets __name__ to "clean" and the main() call does not run. This pattern is the difference between a script that you can only run one way and a script whose useful pieces other people (and your future notebooks) can also import.
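To watch the __name__ switch happen, the sketch below writes a tiny stand-in file to a temporary folder, imports it, and then executes it the way python file.py would. The file name demo_clean.py and the RAN_MAIN flag are hypothetical, chosen only for the demonstration; runpy.run_path is the standard-library way to execute a file as a script from Python.

```python
import importlib.util
import runpy
import tempfile
from pathlib import Path

# A tiny stand-in for clean.py (hypothetical file, written to a temp folder).
SOURCE = '''
RAN_MAIN = False

def main():
    global RAN_MAIN
    RAN_MAIN = True

if __name__ == "__main__":
    main()
'''


def load_both_ways():
    """Import the file as a module, then execute it as a script."""
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "demo_clean.py"
        path.write_text(SOURCE)

        # 1. Import it: __name__ is the module name, so main() is skipped.
        spec = importlib.util.spec_from_file_location("demo_clean", path)
        module = importlib.util.module_from_spec(spec)
        spec.loader.exec_module(module)
        imported = (module.__name__, module.RAN_MAIN)

        # 2. Run it like `python demo_clean.py`: __name__ is "__main__".
        namespace = runpy.run_path(str(path), run_name="__main__")
        executed = (namespace["__name__"], namespace["RAN_MAIN"])
    return imported, executed


print(load_both_ways())
```

The first pair reports the module name with RAN_MAIN still False; the second reports "__main__" with RAN_MAIN set to True, which is the guard doing its job.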

Inside main(), the script’s job is to take some inputs (file paths, URLs, parameters) and produce some outputs (cleaned CSVs, figures, summaries, logs). A good habit is to write outputs to predictable folders — data/processed/ for cleaned tables, figures/ for plots, reports/ for finished artifacts — so your collaborators (and future you) always know where to look.

For small status messages while a script is running, print() is fine — “loaded 42,103 rows,” “starting cleaning step.” For longer-lived scripts, especially anything that runs on a schedule or that you want to debug after the fact, switch to the standard library logging module instead. Logging gives you levels (INFO, WARNING, ERROR), timestamps, and the ability to send output to a file. The simplest possible setup is:

```python
import logging

logging.basicConfig(level=logging.INFO,
                    format="%(asctime)s %(levelname)s %(message)s")
log = logging.getLogger(__name__)

log.info("loaded %d rows", len(df))  # df is the DataFrame loaded earlier
```

Stick with print for course assignments; reach for logging when you start writing scripts that other people will depend on or that run unattended.
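For the "send output to a file" case mentioned above, one possible setup is a small helper that attaches a FileHandler to a named logger. The helper name make_logger, the logger name, and the format string are assumptions made for this sketch.

```python
import logging
from pathlib import Path


def make_logger(log_path: Path, name: str = "clean_sales") -> logging.Logger:
    """Return a logger that writes timestamped lines to `log_path`.

    Hypothetical helper; the logger name and format are illustrative.
    """
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.handlers.clear()  # avoid duplicate lines if called twice
    handler = logging.FileHandler(log_path, encoding="utf-8")
    handler.setFormatter(
        logging.Formatter("%(asctime)s %(levelname)s %(message)s"))
    logger.addHandler(handler)
    return logger
```

A script would call log = make_logger(Path("run.log")) once in main() and then use log.info(...) and log.warning(...) wherever it previously used print.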

17.3 Organizing reusable code: from one file to src/

From monolith to functions

Most student code starts life as a single long notebook or script where every line runs in order. That is fine for the first week of a project — the fastest way to understand a new dataset is to write straight-line code and watch what happens. But once the same block starts appearing in two cells, or in two scripts, you are paying for the duplication in ways that compound over time: every bug has to be fixed twice, every improvement has to be applied twice, and any divergence between the two copies becomes a mystery that eats an afternoon.

The fix is to notice the duplication and extract it into a function. A block that lowercases column names and drops missing rows can become a clean_columns(df) function. A block that loads a CSV and parses dates can become a load_sales(path) function. Each extraction is a tiny refactor, and each one replaces many lines of repeated code with a single call.

```python
# Before: the same six lines appear in three cells and one script
df.columns = df.columns.str.strip().str.lower()
df["date"] = pd.to_datetime(df["date"], errors="coerce")
df["amount"] = pd.to_numeric(df["amount"], errors="coerce")
df = df.dropna(subset=["customer_id", "date", "amount"])
df = df[df["amount"] > 0]
df = df.reset_index(drop=True)

# After: one function, called from anywhere that needs it
def clean_sales(df: pd.DataFrame) -> pd.DataFrame:
    df = df.copy()  # copy first so the caller's DataFrame is not modified
    df.columns = df.columns.str.strip().str.lower()
    df["date"] = pd.to_datetime(df["date"], errors="coerce")
    df["amount"] = pd.to_numeric(df["amount"], errors="coerce")
    df = df.dropna(subset=["customer_id", "date", "amount"])
    df = df[df["amount"] > 0]
    return df.reset_index(drop=True)
```

The second piece of advice is to make each extracted function pure whenever you can: inputs come in through parameters, outputs go out through the return value, and the function does not read or modify any global state. A pure function is easy to test (just pass it inputs and check the output), easy to reuse (the answer depends only on what you passed in), and easy to reason about (there is no hidden context to track). Not every function can be pure (some have to read a file or write a plot), but the closer you get, the more pleasant the code is to work with.
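The "easy to test" claim can be illustrated without pandas. The sketch below applies the same idea to a list of plain dictionaries; clean_rows and the sample rows are hypothetical stand-ins for clean_sales and a real DataFrame.

```python
def clean_rows(rows):
    """Pure: builds and returns a new list; `rows` is left untouched."""
    cleaned = []
    for row in rows:
        # Normalize keys on a fresh dict instead of editing `row` in place.
        fresh = {key.strip().lower(): value for key, value in row.items()}
        amount = fresh.get("amount")
        if amount is None or amount <= 0:
            continue  # drop missing or non-positive amounts
        cleaned.append(fresh)
    return cleaned


raw = [{" Amount ": 12.5}, {" Amount ": None}, {" Amount ": -3}]
result = clean_rows(raw)

assert result == [{"amount": 12.5}]
assert raw[0] == {" Amount ": 12.5}  # the input was not modified
```

Because the function depends only on its argument, the two assertions are the whole test: no files, no globals, no setup.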

A simple project layout

Once you have a handful of extracted functions, they need a home. A standard layout has emerged for data-focused Python projects, and adopting it early saves you from the “where does this go?” tax every time you add a new file:

```text
my-project/
├── README.md            # what, why, and how to run it
├── notebooks/           # narrative exploration (one per question)
│   └── 01-explore.ipynb
├── src/                 # reusable Python modules
│   ├── __init__.py
│   ├── cleaning.py
│   └── plotting.py
├── scripts/             # CLI entry points that import from src/
│   └── run_cleaning.py
├── data/
│   ├── raw/             # immutable inputs
│   └── processed/       # rebuildable outputs
└── figures/             # generated plots
```

Each folder has one job. notebooks/ is for exploration and narrative: the messy cells where you think out loud. src/ is for functions that more than one notebook or script depends on: the pure logic. scripts/ is for command-line entry points that orchestrate src/ functions, the stuff you actually run on a schedule or against a batch of files. data/raw/ is read-only, data/processed/ is rebuildable, and figures/ collects the plots you want to share. The README.md at the top explains what the project does and how to run it.

The power of this layout is that it tells a reader — including future you — where to look for every kind of content without having to ask. “Where’s the cleaning logic?” src/cleaning.py. “How do I run it from the command line?” scripts/run_cleaning.py. “Where are the results?” data/processed/. The answers are never in doubt.

Import paths without pain (student-safe guidance)

Imports cause more suffering for beginners than almost anything else in Python, and the reason is almost always the same: people try to run scripts and notebooks from wherever they happen to be, instead of from a single predictable place. The single habit that eliminates almost every import error is always run your code from the project root, the folder that contains src/, notebooks/, data/, and README.md.

When you run python -m scripts.run_cleaning from the project root, Python puts the current directory at the front of its search path for imports, which means from src.cleaning import clean_sales just works: src/ is visible because it lives right there in the current directory. Running the file by path (python scripts/run_cleaning.py) behaves differently: Python then puts the script’s own folder, scripts/, at the front of the search path instead of the current directory, so src/ is not visible and the import fails. Same code, same file, different way of launching it, different result.

```shell
# Works: -m puts the current directory (the project root) on the Python path
$ cd ~/Courses/INFO-3010/Project
$ python -m scripts.run_cleaning

# Fails: running by path puts scripts/ on the Python path, so src/ is not visible
$ python scripts/run_cleaning.py
ModuleNotFoundError: No module named 'src'
```

The second habit is to stop copy-pasting code between notebooks. Every time you find yourself opening two notebooks side-by-side to copy the same cleaning cell from one into the other, that is a function begging to be extracted into src/ and imported from both places. The copy-paste feels faster in the moment, but it costs you every time one of the copies needs to change, and since you are the one doing the copying, you are the one who pays the cost.

The third is a mindset shift: when imports are confusing, treat it as a project-structure problem, not a magic-command problem. The temptation is to reach for sys.path.insert(0, "../..") or similar incantations to force imports to work in the current layout. (autoreload, covered in Section 17.4, solves a different problem: it refreshes modules that already import correctly.) These tricks sometimes help, but they are almost always patching a symptom. If you find yourself using them, step back and ask whether the project layout matches the one above: whether you have a clean project root you are running from, whether src/ is a real folder with an __init__.py, whether you are launching your notebook server from the root. Nine times out of ten, the fix is a structural one, and the magic commands become unnecessary.

17.4 Loading custom scripts into notebooks

The basic pattern: import your code

Once your reusable logic lives in src/, a notebook can pull it in with a normal Python import. The workflow is almost boring in its simplicity: launch Jupyter from the project root, open a notebook, and import your functions the same way you would import anything from the standard library.

```python
# notebooks/01-explore.ipynb, first code cell
from pathlib import Path

import pandas as pd

from src.cleaning import clean_sales
from src.plotting import plot_monthly_revenue

df = pd.read_csv("data/raw/sales.csv")
cleaned = clean_sales(df)
plot_monthly_revenue(cleaned)
```

Notice what the notebook is doing and what it is not doing. It orchestrates the work (loads the data, calls the cleaning function, calls the plotting function) and it provides the narrative around those calls (markdown cells explaining what you are looking at and why). What it does not contain is the ten-line body of clean_sales or the fifteen-line body of plot_monthly_revenue. Those live in src/, where they can be reused, tested, and improved in one place.

The rule of thumb for a well-structured notebook is that no single code cell should be more than ten or fifteen lines. If a cell is growing past that, it is usually hiding a function that wants to be extracted. Move the logic to src/, import it back, and the notebook becomes easier to read and dramatically easier to reuse.

Iterating on imported code: reloading

The first time you edit a function in src/cleaning.py and then re-run the notebook cell that calls it, you will notice something surprising: the cell still runs the old version of the function. This is not a bug; it is how Python imports work. When the notebook imports clean_sales, Python loads src/cleaning.py once and caches the result in memory. Subsequent imports of the same module return the cached version, so your edits to the source file do not automatically appear in the running kernel.

There are two ways to deal with this, and both have their place. The heavy-handed option is to restart the kernel (Kernel → Restart) and re-run all cells. This is the most reliable — you are guaranteed to pick up the latest version of every module — but you lose any state you had built up in the notebook, which can be annoying if the data load was slow. The lightweight option is to ask Python to reload the specific module you just edited:

```python
import importlib

from src import cleaning
importlib.reload(cleaning)             # pick up edits to src/cleaning.py
from src.cleaning import clean_sales   # re-import the updated function

df = clean_sales(pd.read_csv("data/raw/sales.csv"))
```

Jupyter also offers a convenience called autoreload that does this for you automatically. In the first cell of a notebook, run:

```python
%load_ext autoreload
%autoreload 2
```

From then on, every time you run a cell, Jupyter checks whether any imported modules have changed on disk and reloads them before running your code. This is the right setup for an exploratory notebook where you are iterating rapidly between editing src/ and trying things out. One caveat: autoreload occasionally gets confused when you rename a function or change its signature, and the fix is to restart the kernel and re-run. Do not over-rely on autoreload in a notebook you are about to hand in; run it one last time with a clean kernel before you submit.
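The caching behavior described above can be reproduced outside a notebook. The sketch below writes a throwaway module to a temporary folder, imports it, edits the file, and shows that the old function keeps running until importlib.reload is called. The module name demo_cleaning and its label function are hypothetical.

```python
import importlib
import sys
import tempfile
from pathlib import Path


def demo_reload():
    """Return (before edit, after edit without reload, after reload)."""
    with tempfile.TemporaryDirectory() as tmp:
        mod_path = Path(tmp) / "demo_cleaning.py"
        mod_path.write_text("def label():\n    return 'old'\n")
        sys.path.insert(0, tmp)
        try:
            importlib.invalidate_caches()
            module = importlib.import_module("demo_cleaning")
            before = module.label()

            # Edit the file on disk; the running process still holds the old code.
            mod_path.write_text("def label():\n    return 'new version'\n")
            stale = module.label()

            importlib.reload(module)  # re-execute the file in place
            after = module.label()
        finally:
            # Clean up so the demo leaves no trace in this process.
            sys.path.remove(tmp)
            sys.modules.pop("demo_cleaning", None)
    return before, stale, after


print(demo_reload())
```

The middle value is the interesting one: the file on disk has already changed, yet the call still returns the old result, which is exactly what happens in a notebook cell before a reload or kernel restart.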

Alternative: run a script from a notebook

Sometimes you do not want to import a script — you want to run it as an external process, the same way you would from the terminal. Jupyter makes this easy with the ! prefix, which hands the line off to the shell:

```python
!python -m scripts.run_cleaning --input data/raw/sales.csv --output data/processed/sales_clean.csv
!ls -lh data/processed/
```

This is occasionally the right tool, for example when you want to demonstrate a complete end-to-end run of a pipeline inside a notebook narrative, or when the script is written in a language other than Python. But it comes with a trade-off worth understanding: the script runs in a separate process, so anything it computes is not available to the notebook’s Python kernel. If run_cleaning.py builds a DataFrame and then exits, that DataFrame is gone; the only way to get the results is to read the output file the script wrote.

The consequence is that running a script from a notebook can create confusing state splits, where the notebook has variables that exist only in the kernel and the script has variables that exist only in its own process, and the two never see each other. For exploratory work, it is almost always cleaner to import the script’s functions into the notebook and call them directly — the result is a single Python process, a single memory space, and no state surprises.
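The process boundary described above is easy to see with the standard-library subprocess module, which does roughly what the ! prefix does. In this sketch the child "script" is a one-line string (a hypothetical stand-in for run_cleaning.py) so the example stays self-contained.

```python
import subprocess
import sys

# Child script as a string so the sketch is self-contained (hypothetical).
CHILD_CODE = "total = sum(range(5)); print(total)"


def run_child():
    """Run CHILD_CODE in a separate Python process and capture its stdout."""
    result = subprocess.run(
        [sys.executable, "-c", CHILD_CODE],
        capture_output=True, text=True, check=True,
    )
    return result.stdout.strip()


output = run_child()
print(output)  # the child's printed text comes back as a string
```

Only the printed text crosses the boundary. The child's total variable never exists in the calling process, which is why results from an external script have to come back through stdout or an output file.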

Notebook “smoke test” cell

A habit worth adopting for any notebook that depends on imported code is a smoke test cell near the top — a small diagnostic block that runs before any real work and confirms the environment is sane. The goal is to catch the common setup problems (wrong kernel, wrong working directory, missing data, missing module) immediately, with a clear message, before they show up as confusing errors five cells later.

```python
# Smoke test: run this cell first
import sys, os
from pathlib import Path

print("python:  ", sys.executable)
print("cwd:     ", os.getcwd())
print("project: ", Path.cwd().name)

# Check required files exist
for rel in ["data/raw/sales.csv", "src/cleaning.py"]:
    p = Path(rel)
    print(f"{'OK' if p.exists() else 'MISSING':8s} {rel}")

# Import key modules and print versions
import pandas as pd
from src import cleaning

print("pandas:  ", pd.__version__)
print("cleaning module:", cleaning.__file__)
```

When the smoke test prints the path of a Python interpreter that is not your project’s virtual environment, you know the kernel is wrong. When cwd is not the project root, you know the kernel started in the wrong folder. When a required file shows up as MISSING, you know to download it before running the analysis. Each of these is a thirty-second fix when you catch it here and a half-hour detour when you catch it later. Make the smoke test cell the first cell of every notebook that depends on src/, and you will save yourself the detours.

17.5 Passing parameters from the command line

Why parameterize scripts

Make the same analysis run on different datasets or time ranges.

Enable automation (scheduled runs, batch processing).

Reduce copy/paste “variant” scripts.

Three levels of parameterization

Parameterization comes in three flavors that scale with how serious the project is. The simplest level is constants in the script itself: you put INPUT = "data/q3.csv" near the top and edit it whenever you need a different dataset. This is fast and fine for one-off work; it does not scale to running the same script against many inputs without becoming a copy-paste mess. The next level is a config file — a small .yml or .toml file that holds parameters and that the script reads at startup. Config files are good when several teammates need to adjust parameters without editing code. The most flexible level is command-line arguments, where parameters are passed when the script is invoked. CLI scripts are the right shape for automation, scheduled runs, and any workflow where the same code needs to run with different inputs.

The CLI pattern

The standard way to give a Python script command-line arguments is the built-in argparse module. You define each argument once, with a description and (where appropriate) a default value, and argparse handles parsing the user’s input, generating a --help message, and rejecting invalid arguments before your code ever runs.

```python
import argparse
from pathlib import Path

def parse_args():
    p = argparse.ArgumentParser(description="Clean a sales CSV.")
    p.add_argument("--input", type=Path, required=True,
                   help="path to the raw CSV")
    p.add_argument("--output", type=Path, required=True,
                   help="where to write the cleaned CSV")
    p.add_argument("--seed", type=int, default=0,
                   help="random seed for reproducibility")
    return p.parse_args()
```

Now python clean.py --input data/raw/q3.csv --output data/processed/q3_clean.csv works, and python clean.py --help prints a friendly summary of every option.
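A small variation that may be worth adopting is to let parse_args accept an optional list of arguments. This is a sketch of that design choice rather than a required pattern: with argv=None argparse reads the real command line, while a test or notebook can pass a list directly.

```python
import argparse
from pathlib import Path


def parse_args(argv=None):
    """Parse CLI arguments; pass a list to test without touching sys.argv."""
    p = argparse.ArgumentParser(description="Clean a sales CSV.")
    p.add_argument("--input", type=Path, required=True,
                   help="path to the raw CSV")
    p.add_argument("--output", type=Path, required=True,
                   help="where to write the cleaned CSV")
    p.add_argument("--seed", type=int, default=0,
                   help="random seed for reproducibility")
    return p.parse_args(argv)  # argv=None means "read sys.argv[1:]"


args = parse_args(["--input", "data/raw/q3.csv", "--output", "out.csv"])
print(args.input, args.output, args.seed)
```

The values arrive already converted: args.input and args.output are Path objects, and args.seed falls back to its default of 0.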

Validating inputs

A good habit is to fail fast when an input is wrong, with a message that tells the user what to do. Check that input paths exist before you try to read from them. Validate that numeric parameters are in sensible ranges (a sample size cannot be negative, a percentage must be between 0 and 100). And produce clear error messages, not stack traces. Raising SystemExit with a message is the simplest way to do this inside main(): Python prints the message to stderr and exits with a non-zero status, with no traceback. (Inside a type= converter, argparse.ArgumentTypeError plays the same role.)

```python
def main():
    args = parse_args()
    if not args.input.exists():
        raise SystemExit(f"input not found: {args.input}")
    if args.seed < 0:
        raise SystemExit(f"seed must be non-negative, got {args.seed}")
    ...
```

The script aborts immediately with a one-line message instead of running for ten minutes and then crashing on a missing file.
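The same range check can also live inside argparse itself, as a type= converter that raises argparse.ArgumentTypeError. The sketch below is one possible shape; non_negative_int and build_parser are hypothetical names.

```python
import argparse


def non_negative_int(text):
    """argparse `type=` converter that rejects negative numbers."""
    value = int(text)  # a ValueError here is also reported by argparse
    if value < 0:
        raise argparse.ArgumentTypeError(f"must be non-negative, got {value}")
    return value


def build_parser():
    """Build a parser whose --seed option validates its own value."""
    p = argparse.ArgumentParser(description="Clean a sales CSV.")
    p.add_argument("--seed", type=non_negative_int, default=0,
                   help="random seed for reproducibility")
    return p


print(build_parser().parse_args(["--seed", "3"]).seed)
```

With this in place, a bad value such as --seed=-1 makes argparse print a usage message naming the offending option and exit before main() does any work.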

Reproducibility and auditability

Every parameterized script should print or log its resolved parameters at startup, so that anyone reading the output later can tell exactly what configuration produced it. The same goes for outputs: write them to a path that includes the relevant parameters (a date, a seed, an input filename) so you can tell two runs apart at a glance. And record the exact command used to run the script in a README.md or a metadata file alongside the output, so someone can reproduce the run six months from now without guessing.

```python
def main():
    args = parse_args()
    print(f"INPUT={args.input} OUTPUT={args.output} SEED={args.seed}")
    ...
```

Three small habits, almost no extra effort, and your work is dramatically more reproducible.
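The other two habits (parameter-bearing output names and a recorded command) could look like the sketch below. The helper names, the file-naming scheme, and the .meta.json sidecar are assumptions made for illustration.

```python
import json
import shlex
from pathlib import Path


def output_path_for(input_path: Path, seed: int, out_dir: Path) -> Path:
    """Build an output name that records which input and seed produced it."""
    return out_dir / f"{input_path.stem}_seed{seed}_clean.csv"


def write_run_metadata(output_path: Path, argv: list, params: dict) -> Path:
    """Save the exact command and resolved parameters next to the output."""
    meta_path = output_path.with_name(output_path.stem + ".meta.json")
    record = {"command": shlex.join(["python", *argv]), "params": params}
    meta_path.write_text(json.dumps(record, indent=2))
    return meta_path


print(output_path_for(Path("data/raw/q3.csv"), 7, Path("data/processed")).name)
```

In a real script, argv would be sys.argv and params would be built from the parsed arguments, so the sidecar file always reflects the run that actually happened.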

17.6 Translating notebooks into scripts (and vice versa)

Why convert

Once a notebook has grown past the exploration stage and the analysis has stabilized, there are three good reasons to turn it into a script (or into a pair of script + thinner notebook). The first is automation: scripts are trivial to run unattended, from a shell, from a scheduled job, from a CI pipeline. You do not need a browser or a running kernel to execute a .py file. If the same analysis needs to run every Monday morning or against every new data drop, it needs to be a script.

The second is version control hygiene. Notebooks serialize as JSON, with every cell output — including large images — embedded in the file. A simple edit that changes the order of two plots can produce a 4,000-line diff in the raw .ipynb file, most of which is base64-encoded PNG data. Scripts, being plain text, produce diffs that match what a human actually changed. Code reviewers can actually read them. Merge conflicts in scripts are normal three-way merges; merge conflicts in notebooks are usually insoluble without special tools. (Chapter 31 has more on this.)
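To make the "notebooks serialize as JSON" point concrete, the sketch below builds a hand-written two-cell notebook in the nbformat 4 layout and pulls out just the code. It illustrates the file structure only; it is not how nbconvert is implemented, and the cell contents are made up.

```python
import json

# A hand-written, minimal notebook in the nbformat 4 layout (illustrative).
NOTEBOOK = {
    "nbformat": 4,
    "nbformat_minor": 5,
    "metadata": {},
    "cells": [
        {"cell_type": "markdown", "metadata": {}, "source": ["# Sales analysis"]},
        {
            "cell_type": "code",
            "metadata": {},
            "execution_count": 1,
            "source": ["total = 2 + 3\n", "total"],
            "outputs": [
                {"output_type": "execute_result", "execution_count": 1,
                 "metadata": {}, "data": {"text/plain": ["5"]}}
            ],
        },
    ],
}


def code_sources(notebook):
    """Return the source text of each code cell, ignoring stored outputs."""
    return ["".join(cell["source"])
            for cell in notebook["cells"] if cell["cell_type"] == "code"]


on_disk = json.dumps(NOTEBOOK, indent=1)  # roughly what the .ipynb file holds
print(len(on_disk.splitlines()), "lines of JSON for two lines of code")
print(code_sources(NOTEBOOK))
```

Everything in the file other than the two source lines is structure, metadata, or stored output, and all of it takes part in a diff. Plot outputs add base64 image data on top of that.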

The third reason is diagnostic. Converting a notebook to a script is one of the best ways to surface hidden-state problems — cells that depend on a variable defined in another cell that is no longer in the notebook, cells that rely on being run in a specific order, cells that only work after a manually-run setup step. A script runs top to bottom, with no cached kernel state to rescue it, so any dependency that was only working by accident will fail loudly. The first pass of turning a messy notebook into a script is sometimes the first honest test of whether the notebook actually does what it claims.

One-way conversion: notebook to script

The standard tool for turning an .ipynb into a .py is jupyter nbconvert, which ships with Jupyter itself. One command produces a script:

```shell
jupyter nbconvert --to script notebooks/analysis.ipynb \
    --output-dir scripts/
```

The result is a file called scripts/analysis.py that contains every code cell from the notebook, concatenated in order, with markdown cells converted to # Title-style comments. That file is almost never a finished script on its own. It still has the df.head() calls you only ran to peek at the data, the commented-out experiments that were there just in case, and the long block of imports that used to be spread across ten different cells. Treat the conversion as a starting point, not a deliverable:

```shell
# 1. Convert
jupyter nbconvert --to script notebooks/analysis.ipynb --output-dir scripts/

# 2. Open the result and refactor
#    - delete interactive inspection calls (df.head(), df.info())
#    - extract repeated blocks into functions
#    - wrap the main flow in a main() function
#    - add an if __name__ == "__main__": guard
#    - add argparse if you want CLI parameters

# 3. Run it end-to-end from a clean shell to confirm it still works
python scripts/analysis.py
```

By the end, you have a script that does the same thing the notebook did, but as a single reliable artifact that can be scheduled, tested, and version-controlled cleanly. The original notebook stays in notebooks/ if you want to keep it as narrative; the script is what you actually run.

Round-trip conversion: paired notebooks

Sometimes you want the narrative affordance of a notebook and the version-control cleanliness of a script, without maintaining two separate files. The tool for that job is Jupytext, which lets you pair any notebook with a plain-text representation — typically a .py file in “percent” format — and keeps the two in sync automatically whenever you save either one.

```text
notebooks/
├── analysis.ipynb   # the rendered notebook (outputs, plots)
└── analysis.py      # the paired text version (editable in any editor)
```

The .py version is a regular Python file with special comments marking where each cell starts:

```python
# %% [markdown]
# # Sales analysis
# Exploration of Q3 sales data.

# %%
import pandas as pd
from src.cleaning import clean_sales

# %%
df = pd.read_csv("data/raw/sales.csv")
cleaned = clean_sales(df)
```

You get clean git diffs (edit the .py, the notebook follows), better merge behavior, and the option to edit cells in VS Code or Vim when that is more convenient than the browser. Meanwhile the .ipynb is still a real notebook you can open in Jupyter and use for narrative work. Commit both files to version control, or commit only the .py and regenerate the .ipynb when needed; either works.

Jupytext is an install (pip install jupytext) and a one-time pair command per notebook, and then it just runs in the background. For any project that both uses notebooks heavily and cares about code review, it is a small cost for a large payoff.

Parameterizing notebooks (bridge to automation)

There is a middle path between “notebook forever” and “convert to a script”: keep the notebook, but run it programmatically with different parameters each time. This is how you generate a monthly report from a single template, or produce the same analysis for fifty different cities, without maintaining fifty copies. The tool is Papermill, which executes a notebook from the command line with parameters injected into a designated cell.

The setup is a two-step ritual. First, tag a code cell in your notebook with the tag parameters (in Jupyter Lab: click the gear icon in the right sidebar, then Cell Tags, then add parameters). That cell should contain the default values for any parameter you want to override:

```python
# Cell tagged `parameters`
input_file = "data/raw/sales.csv"
output_file = "data/processed/summary.csv"
start_date = "2026-01-01"
```

Then run the notebook with Papermill, passing new values on the command line:

```shell
papermill notebooks/analysis.ipynb outputs/analysis_q3.ipynb \
    -p input_file data/raw/sales_q3.csv \
    -p output_file data/processed/summary_q3.csv \
    -p start_date 2026-07-01
```

Papermill runs the notebook end-to-end, injects your parameters as if they had been typed into the parameters cell, and writes a new executed notebook with the results and outputs baked in. This is the right tool when the final artifact is supposed to be a human-readable notebook (a monthly report, a per-experiment writeup, a gallery of results) and what changes between runs is only a handful of inputs. For pipelines where the output is pure data, a plain script is usually the better choice.

17.7 Notebook versus script: trade-offs and decision rules

Notebooks are best for

Notebooks shine when the primary goal is exploration, learning, or communication. They are the right tool when you are still figuring out what your data looks like, when you want to iterate rapidly between code and visualization, and when the final artifact is a narrative analysis with plots and commentary that someone else will read. The mix of code, prose, and inline output is what makes a notebook a document, and that is what scripts cannot do well.

Scripts are best for

Scripts shine when the primary goal is repeatable execution. The same code, applied to many inputs, on a schedule, possibly in CI, with predictable logs and outputs that go to predictable locations. Anything that needs to run unattended — at 3 AM as a cron job, on every push to GitHub, in a batch over a hundred files — should be a script. Scripts also produce dramatically cleaner diffs in version control than notebooks (which serialize as JSON with embedded outputs), which makes them easier to code review.

A common hybrid pattern

In practice the right shape for a serious project is usually neither pure-notebook nor pure-script, but a layered hybrid. The reusable computational logic — cleaning functions, modeling utilities, plot helpers — lives in src/ as plain Python modules with no notebook ceremony. CLI entry points for batch runs live in scripts/, importing from src/. And one or more notebooks in notebooks/ orchestrate the analysis and provide the narrative, also importing from src/. The notebook is the story; the scripts are the engine; src/ is the shared library that both depend on. With this structure, the same cleaning function gets exercised both in the interactive notebook and in the unattended pipeline, so you do not have two slightly-different copies that drift apart.

When to move from a notebook to a script

There are clear smells that signal “this notebook should become (or call) a script.” If you find yourself manually rerunning the same notebook with small parameter changes, you want a script with arguments. If the notebook takes long enough that you want to run it overnight, you want a script. If the work needs to happen on a schedule, in CI, or as a step in a pipeline, you want a script. And if you find yourself wishing for proper logs, retries, or consistent file outputs, that is the moment to extract the logic.
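As one illustration of the "logs and retries" wish, the sketch below wraps a task in a small retry loop. The helper name `with_retries` and its defaults are assumptions made for this example; in a real project a maintained retry library may be a better fit.

```python
import logging
import time
from typing import Callable, TypeVar

T = TypeVar("T")
log = logging.getLogger("retry")


def with_retries(task: Callable[[], T], attempts: int = 3, delay_seconds: float = 1.0) -> T:
    """Call task(); on failure, log the error, wait, and try again.

    Re-raises the last exception if every attempt fails.
    """
    for attempt in range(1, attempts + 1):
        try:
            return task()
        except Exception as exc:
            log.warning("attempt %d/%d failed: %s", attempt, attempts, exc)
            if attempt == attempts:
                raise
            time.sleep(delay_seconds)
    # Only reachable when the loop never ran.
    raise ValueError("attempts must be at least 1")
```

This kind of wrapper is awkward to use from notebook cells but natural in a script, where a flaky download or database call should not abort an overnight run on the first hiccup.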

The complementary smells say “this should stay in a notebook”: the primary goal is interpretation or communication, the analysis is still exploratory and changing quickly, or you are teaching or documenting reasoning rather than producing a deliverable. Notebooks are the wrong tool for production but the right tool for thinking; do not let “scripts are more professional” push you out of a notebook when a notebook is what you actually need.

17.8 Best practices: making scripts and notebooks play well together

Make data and paths explicit

The rule that protects every other rule is: never hardcode an absolute path in code that other people will read or run. A line like df = pd.read_csv("/Users/alex/Downloads/survey.csv") works on exactly one machine, until the day Alex moves the file. Any collaborator — including you on a new laptop six months from now — gets a FileNotFoundError the moment they try to run it.

The alternative is a stable project root and consistent relative paths inside it. Pick one directory that contains data/, src/, notebooks/, and a README.md; treat that directory as the canonical “project root”; and write every data-reading line as a path relative to that root. Both your notebooks and your scripts should assume they are being run from the root, and both should read the same files from the same locations.

```python
# src/paths.py: one place for every path the project uses
from pathlib import Path

# This file lives in <root>/src/, so two .parent steps reach the project root.
PROJECT_ROOT = Path(__file__).resolve().parent.parent

DATA_RAW = PROJECT_ROOT / "data" / "raw"
DATA_CLEAN = PROJECT_ROOT / "data" / "processed"
FIGURES = PROJECT_ROOT / "figures"
```

Now any script or notebook can from src.paths import DATA_RAW and use DATA_RAW / "survey.csv" without ever writing an absolute path by hand. Because the root is computed from the location of paths.py itself, moving the project to another folder or machine requires no code changes; if the internal layout changes, paths.py is the only file that needs editing. The rest of the code is automatically portable.

A small supporting habit: write a tiny check_paths() function that asserts the expected files exist and call it at the top of every script and the top of every notebook. If anything is missing, you see a clear error immediately, instead of fifteen minutes into a run.
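A minimal sketch of such a helper follows. The signature (a list of paths passed in by the caller) is an assumption for illustration, and it raises FileNotFoundError rather than using a bare assert, since assert statements are skipped when Python runs with -O.

```python
from pathlib import Path
from typing import Iterable


def check_paths(required: Iterable[Path]) -> None:
    """Raise FileNotFoundError listing every required path that is missing."""
    missing = [str(p) for p in required if not Path(p).exists()]
    if missing:
        raise FileNotFoundError("Missing expected files:\n  " + "\n  ".join(missing))


# Typical call at the top of a script or notebook (the file name is illustrative):
#     check_paths([DATA_RAW / "survey.csv"])
```

Reporting every missing path at once, rather than stopping at the first, saves a round of fix-and-rerun when several inputs are absent.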

Document how to run

Any project that anyone else might need to run, including future you, deserves a section in README.md titled “How to run it” that contains an exact, copy-pasteable recipe. Not “activate the environment and run the analysis” — the actual commands, in order, that a fresh reader on a fresh machine would need to execute.

````markdown
## How to run

1. Create and activate the environment:

   ```bash
   python -m venv .venv
   source .venv/bin/activate  # on Windows: .venv\Scripts\activate
   pip install -r requirements.txt
   ```

2. Place the raw data at `data/raw/sales.csv` (download link: …).

3. Run the cleaning pipeline:

   ```bash
   python scripts/run_cleaning.py \
     --input data/raw/sales.csv \
     --output data/processed/sales_clean.csv
   ```

4. Open the analysis notebook:

   ```bash
   jupyter lab notebooks/01-explore.ipynb
   ```

Expected runtime: ~30 seconds for cleaning, ~2 minutes for the notebook. Expected outputs: data/processed/sales_clean.csv (~5 MB).
````

The exact commands protect you from the classic "I just have to type this thing I remember from last month" failure mode. The expected runtime and outputs give the reader a sanity check: if the cleaning step takes five minutes instead of thirty seconds, or the output is 50 KB instead of 5 MB, something is wrong. A good "how to run" section is often the highest-leverage documentation you can write — it is what gets other people unblocked without asking you a question.

Version control discipline

Notebooks are a known pain point in version control (see @sec-git-github), and a few small habits keep them from taking over your repository. First, **clear cell outputs before committing.** A notebook with 200 inline plots serializes to a file that is mostly base64 PNGs, which bloats the repo and makes diffs unreadable. Most editors can be configured to clear outputs on save; at the very least, run **Cell → All Output → Clear** (the classic Notebook menu path; JupyterLab offers an equivalent command under its Edit menu) before you commit a notebook that other people will review.

Second, **avoid embedding large data into the notebook itself.** A cell that reads a 50 MB CSV and then does `df` on a line by itself stores a rendered table in the notebook's output; pandas truncates long frames by default, but if the display limits have been raised, every row gets embedded. Either slice the output (`df.head()`, `df.sample(10)`) or print a summary (`df.shape`, `df.describe()`) instead. Raw data belongs in `data/`, not in `notebooks/`.

Third, **prefer Jupytext pairing or script conversion for anything code-reviewed.** If a reviewer has to compare two versions of a notebook, they almost certainly want to see a plain-text diff, not a JSON blob. Pairing with Jupytext gives them the best of both worlds: the reviewer reads the `.py`, the analyst keeps using the `.ipynb`, and the two stay in sync automatically. For notebooks that are handed in once and never reviewed, this is overkill; for notebooks that are part of a shared project, it is almost always worth the small setup cost.
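To show what "clearing outputs" means at the file level (the first habit above), here is a minimal sketch that edits the notebook's JSON directly using only the standard library. It assumes the common nbformat 4 layout with a top-level `cells` list, and the function name `clear_outputs` is hypothetical. In practice a dedicated tool such as nbstripout, or `jupyter nbconvert --clear-output --inplace`, is likely the more robust choice.

```python
import json
from pathlib import Path


def clear_outputs(notebook_path: Path) -> int:
    """Remove outputs and execution counts from a notebook file in place.

    Returns the number of code cells that actually had something to clear.
    """
    nb = json.loads(notebook_path.read_text(encoding="utf-8"))
    cleared = 0
    for cell in nb.get("cells", []):
        if cell.get("cell_type") == "code":
            if cell.get("outputs") or cell.get("execution_count") is not None:
                cleared += 1
            cell["outputs"] = []
            cell["execution_count"] = None
    notebook_path.write_text(
        json.dumps(nb, indent=1, ensure_ascii=False) + "\n", encoding="utf-8"
    )
    return cleared
```

Markdown cells and cell sources are left untouched, so the diff a reviewer sees after this step contains only changes to code and prose.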

Stakes and politics

The choice between a notebook and a script is presented in this chapter as a technical trade-off, and it is. It is also a cultural one. Notebooks are the dominant idiom in academic data science, scientific computing, and teaching; scripts are the dominant idiom in software engineering, production systems, and any code that runs without a human watching. Two consequences are worth naming.

First, *what counts as "professional" code*. Hiring panels for data-engineer or ML-engineer roles often treat notebook-only portfolios as a yellow flag, even when the analytical work is excellent — the implicit norm is that "real" code is `.py` files in a Git repo with tests and a `main()`. The norm is not arbitrary (scripts are easier to review, schedule, and reuse), but it does mean that the same skill applied in two formats reads as two different levels of seriousness. Second, *which community gets to claim a piece of work*. A study published as a clean notebook with figures inline reads as a research artifact; the same code restructured into `src/` with command-line flags reads as software. Funders, reviewers, and tenure committees treat the two differently, and the conversion has costs — time, learning curve, infrastructure access — that fall unequally on early-career researchers, students, and people without a software-engineering mentor.

See @sec-artifacts-politics for the broader framework. The concrete prompt to carry forward: when you choose between a notebook and a script, name who the audience is and what they will read your code as — and when you review someone else's, do the same.

Worked examples

Turning notebook code into importable functions

Your notebook has the same five lines of cleaning code in three different cells: lower-case the column names, parse the date column, drop rows with no customer id. Time to extract them.

Create `src/cleaning.py`:

```python
# src/cleaning.py
import pandas as pd


def clean_sales(df: pd.DataFrame) -> pd.DataFrame:
    """Normalize column names, parse dates, and drop incomplete rows."""
    df = df.copy()  # work on a copy so the caller's DataFrame is not modified
    df.columns = df.columns.str.strip().str.lower()
    # Unparseable dates become NaT and are dropped along with missing ids.
    df["date"] = pd.to_datetime(df["date"], errors="coerce")
    return df.dropna(subset=["customer_id", "date"])
```

In the notebook, replace those three repeated cells with a single import and call:

```python
import pandas as pd

from src.cleaning import clean_sales

df = clean_sales(pd.read_csv("data/raw/sales.csv"))
```

Now there is exactly one place where the cleaning logic lives. Improvements to it apply everywhere automatically.

Writing a CLI script around your functions

Now turn the same cleaning logic into something you can run from the command line on any input file:

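```python
# scripts/run_cleaning.py
# Assumes the project root is importable (for example, PYTHONPATH=. when run
# from the root); otherwise `from src...` fails, because Python puts scripts/
# rather than the current directory first on sys.path.
import argparse
from pathlib import Path

import pandas as pd

from src.cleaning import clean_sales


def main():
    # Both flags are required, so a missing path fails fast with a usage message.
    p = argparse.ArgumentParser()
    p.add_argument("--input", type=Path, required=True)
    p.add_argument("--output", type=Path, required=True)
    args = p.parse_args()

    df = pd.read_csv(args.input)
    cleaned = clean_sales(df)
    cleaned.to_csv(args.output, index=False)
    print(f"wrote {len(cleaned):,} rows to {args.output}")


if __name__ == "__main__":
    main()
```

Run it like any script: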

```bash
python scripts/run_cleaning.py \
  --input data/raw/sales.csv \
  --output data/processed/sales_clean.csv
```

The cleaning function is unchanged, the notebook is unchanged, and you now have a third way to invoke the same logic. Parameter changes are command-line flags rather than code edits.
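One optional refinement, sketched here under the assumption that you want to exercise the parser from a notebook or a test: let the parsing function accept an explicit argument list. The `parse_args(argv)` helper and the file names are illustrative, not part of the script above. Passing `argv=None` makes argparse fall back to the real command line.

```python
import argparse
from pathlib import Path
from typing import Optional, Sequence


def parse_args(argv: Optional[Sequence[str]] = None) -> argparse.Namespace:
    """Parse --input/--output; argv=None means 'read sys.argv' as usual."""
    parser = argparse.ArgumentParser(description="Clean a sales CSV.")
    parser.add_argument("--input", type=Path, required=True, help="raw CSV to read")
    parser.add_argument("--output", type=Path, required=True, help="cleaned CSV to write")
    return parser.parse_args(argv)


# The same parser can now be exercised without a terminal:
args = parse_args(["--input", "data/raw/sales_q3.csv", "--output", "out/q3.csv"])
print(args.input.name, args.output.parent)  # prints: sales_q3.csv out
```

The design choice is small but useful: the command-line behavior is identical, and the flag handling becomes something you can check in a test rather than only by hand.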

Debugging “it imports in the terminal but not in the notebook”

Running from src.cleaning import clean_sales works in python at the terminal, but the same line in your notebook fails with ModuleNotFoundError: No module named 'src'. The cause is one of two things, and the diagnostic is one cell:

```python
import os
import sys

print("python:", sys.executable)
print("cwd: ", os.getcwd())
print("path[0]:", sys.path[0])
```

If python is not your project’s venv interpreter, the kernel is wrong: switch kernels or register a new one (see Chapter 16). If cwd is not the project root, you are running the notebook from a subdirectory and Python cannot find src/ from there: move the notebook, cd into the project root before launching Jupyter, or add the project root to sys.path explicitly. Either way, the diagnostic cell tells you which problem you have within seconds.
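For the last option, adding the project root to sys.path explicitly, a minimal sketch might look like the following. The helper names and the choice of a src directory as the marker for "this is the root" are assumptions for illustration; any file or folder that exists only at the root would do.

```python
import sys
from pathlib import Path
from typing import Optional


def find_project_root(start: Path, marker: str = "src") -> Optional[Path]:
    """Walk upward from start until a directory containing `marker` is found."""
    start = start.resolve()
    for candidate in [start, *start.parents]:
        if (candidate / marker).is_dir():
            return candidate
    return None


def add_project_root_to_path(start: Optional[Path] = None) -> Optional[Path]:
    """Put the project root at the front of sys.path (once) and return it."""
    begin = start if start is not None else Path.cwd()
    root = find_project_root(begin)
    if root is not None and str(root) not in sys.path:
        sys.path.insert(0, str(root))
    return root
```

Calling `add_project_root_to_path()` in the first cell of a notebook under notebooks/ lets the later `from src.cleaning import clean_sales` line work regardless of where Jupyter was launched. It is a workaround; fixing the working directory or the kernel is the cleaner cure.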

Converting a notebook to a script and refactoring

The standard tool for this is jupyter nbconvert, which produces a .py file from a notebook in one command:

```bash
jupyter nbconvert --to script notebooks/analysis.ipynb \
  --output ../scripts/analysis
```

The result is rarely a clean script on its own: it has every cell concatenated, including the interactive df.head() calls that were just for inspection, the cells you wrote and then commented out, and any markdown cells (which become code comments). The conversion is the starting point of a refactor, not the finished product. From there, walk through the file and remove anything that was only there for interactive inspection, extract repeated logic into functions, and wrap the whole thing in a main() and if __name__ == "__main__": block. The end product is a real script you can run unattended; the original notebook stays for narrative use.

Parameterizing a notebook with papermill

Sometimes you want the notebook to be parameterized rather than rewriting it as a script. The papermill tool lets you tag a cell as the “parameters” cell and then execute the notebook from the command line with different values injected. In the notebook, put a code cell at the top with the cell tag parameters:

```python
# parameters
input_file = "data/raw/sales.csv"
output_file = "data/processed/sales_clean.csv"
```

Then from the command line, execute the notebook with whatever parameters you like:

```bash
papermill notebooks/analysis.ipynb outputs/analysis_q3.ipynb \
  -p input_file data/raw/sales_q3.csv \
  -p output_file data/processed/sales_q3_clean.csv
```

Papermill writes a new copy of the notebook with the executed outputs and the new parameter values baked in. This is the right tool when you want to keep the notebook's narrative form but run it many times, for example to generate one report per month from the same template.
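The "one report per month" idea can be sketched as a small loop that builds one Papermill command per period. The helper `papermill_command`, the month list, and the file-naming scheme are hypothetical; the sketch only prints the commands, so it runs without Papermill installed. Papermill also provides a Python API (`papermill.execute_notebook`) that could replace the subprocess call.

```python
from pathlib import Path
from typing import Dict, List


def papermill_command(template: Path, output: Path, params: Dict[str, str]) -> List[str]:
    """Build the argument list for one `papermill` run (nothing is executed)."""
    cmd = ["papermill", str(template), str(output)]
    for name, value in params.items():
        cmd += ["-p", name, str(value)]
    return cmd


template = Path("notebooks/analysis.ipynb")
months = ["2026-01", "2026-02", "2026-03"]  # hypothetical reporting periods

commands = [
    papermill_command(
        template,
        Path(f"outputs/analysis_{month}.ipynb"),
        {
            "input_file": f"data/raw/sales_{month}.csv",
            "output_file": f"data/processed/sales_{month}_clean.csv",
        },
    )
    for month in months
]

for cmd in commands:
    print(" ".join(cmd))
    # To actually execute (requires papermill): subprocess.run(cmd, check=True)
```

Each run leaves behind its own executed notebook, so the output folder doubles as a record of which inputs produced which report.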

17.9 Templates

Template A: Minimal script skeleton

```python
"""One-sentence purpose.

Inputs:
Outputs:
How to run:
"""
from pathlib import Path


def main() -> None:  # add parameters as your script needs them
    ...  # call functions imported from src/ here


if __name__ == "__main__":
    main()
```

Template B: Minimal CLI skeleton (conceptual)

```python
import argparse


def parse_args():
    p = argparse.ArgumentParser(description="One-sentence purpose.")
    p.add_argument("--input", required=True)
    p.add_argument("--output", required=True)
    return p.parse_args()


def main():
    args = parse_args()
    ...  # do the work using args.input and args.output


if __name__ == "__main__":
    main()
```

Template C: Notebook that calls scriptable functions

```python
# 1) Purpose + imports
# 2) Parameters (as variables)
# 3) Call functions from src/
# 4) Save outputs
# 5) Interpretation
# 6) Restart-and-run-all proof
```

17.10 Exercises

Write a script that loads a CSV and prints a short summary (rows, columns, missingness).

Refactor the script so the summary logic is in a function and the script is only an entry point.

Import that function into a notebook and use it on two datasets.

Add a CLI with --input and --output flags.

Convert a notebook to a script and identify at least three hidden-state problems you had to fix.

Write a short paragraph explaining whether your project should be a notebook, a script, or a hybrid—and why.

17.11 One-page checklist

My script has a main() and does not run heavy work on import.

Reusable logic lives in importable functions/modules.

I can import my own code into notebooks and reload changes when iterating.

I can run the same analysis via a CLI with parameters.

I know how to convert notebooks to scripts and use conversion to improve reproducibility.

I choose notebooks for narrative/exploration and scripts for repeatable/automated runs.

17.12 Quick reference: common tools (conceptual)

nbconvert: notebook conversion to scripts and reports.

Jupytext: paired notebook formats for better diffs.

Papermill: parameterized execution of notebooks for batch runs.

- Python docs, argparse tutorial. The canonical walk-through for building command-line interfaces in the standard library.

- Python docs, if __name__ == "__main__". The official explanation of the import-vs-script entry point pattern.

- Pallets, Click documentation. The most widely used third-party CLI framework; useful when argparse starts to feel verbose.

- Sebastián Ramírez, Typer documentation. A modern alternative to Click built on Python type hints; the lowest-friction way to add a CLI to an existing function.

- Real Python, Command-Line Interfaces in Python. A longer-form treatment with subcommands, validation, and worked examples.

- Jupytext documentation. The canonical tool for keeping a .ipynb and a .py in sync, which makes notebooks reviewable in plain text.

- Python Packaging Authority, Packaging Python projects: src vs flat layout. The standard discussion of how to organize a project once src/ enters the picture.